Stop Shipping Translations to the Client: Edge-Native i18n with Astro & Cloudflare (Part 2)

Handling localized Zod validation, react-hook-form, and Astro Actions without bloating your client bundle.

Audio Deep Dive

Too busy to read? Listen to a 21-minute debate on this Architecture Deep Dive (generated by NotebookLM).

What you are about to read is not a search for the easy path.

Let me be upfront (and I probably should have mentioned this in Part 1): if you have a simple site with two or three pages, two languages, and no interactive React Islands, just use Astro’s built-in i18n routing, build it as a static site (output: 'static'), and don’t overcomplicate your life.

But if it’s a complex marketing site and/or you are building a B2B SaaS with a dynamic dashboard, tons of forms, and UGC (user-generated content), while marketing demands green LCP scores despite heavy trackers, that’s when classic approaches break down, and our custom architecture pays back every minute invested in its maintenance.

All of this is dictated by my pragmatic love (if love can be pragmatic :)) for this stack and the desire to achieve maximum user convenience alongside premium Lighthouse metrics, Core Web Vitals, and proper SEO.

For a modern business, high website performance is a baseline condition for survival. You cannot count on intensive organic traffic and ad ROI if your architecture allows a “beautiful” React component to block the main thread for three long seconds.

At first glance, this task is woven from unsolvable contradictions - well then, let’s try to tackle it.

Spoiler alert: I already realize that fitting all aspects of SEO-readiness and dynamic database localization into this single post is impossible. So today, I will focus strictly on the technical implementation of what was promised in Part 1.

We are still building on top of the Astro EdgeKits Core foundation, but with expanded use cases. I will show you everything exactly as it is implemented on this website (edgekits.dev).

The first bottleneck where the Zero-JS Astro i18n concept usually breaks down is client-side form validation. Let’s see how to master react-hook-form and Zod localization on the Edge, making them work seamlessly with Shadcn UI - all without shipping heavy JSON dictionaries or client-side translation engines to the browser.

Under the Hood: The Subscription Flow Stack

To demonstrate this architecture, we will dissect the Newsletter Subscription flow. It’s not just a single <input>; it’s a two-step State Machine (Subscribe -> Segment -> Done) that interacts with our database.

Here is the tooling we use to make it happen:

- Database: Cloudflare D1.
- ORM & Schema Validation: Drizzle (drizzle-orm, drizzle-kit, drizzle-zod).
- Server Logic: Astro Actions.
- Client State Management: react-hook-form, @hookform/resolvers.
- UI Components: Extended Shadcn UI (FieldGroup, Field, FieldLabel, Input, Select, and MultiSelect from the WebDevSimplified (WDS) Shadcn Registry by Kyle Cook).

The Bottleneck: Zod i18n and Client Bundle Bloat

When you build an interactive form in React, the industry-standard reflex is to pair react-hook-form with Zod for validation.

But when you need to internationalize those validation errors (e.g., turning “Invalid email” into “Correo electrónico no válido”), the standard ecosystem pushes you toward packages like zod-i18n-map.

This is a dead end for performance.

To make it work, you have to ship the entire Zod translation dictionary to the client. Suddenly, your carefully optimized, lightweight React Island is dragging an extra 30-50KB of JSON and localization logic into the browser. The main thread chokes, TBT (Total Blocking Time) spikes, and your Web Vitals turn yellow.

We need to validate data on the client to provide instant feedback, but we cannot afford to ship the translations. How do we break this loop?

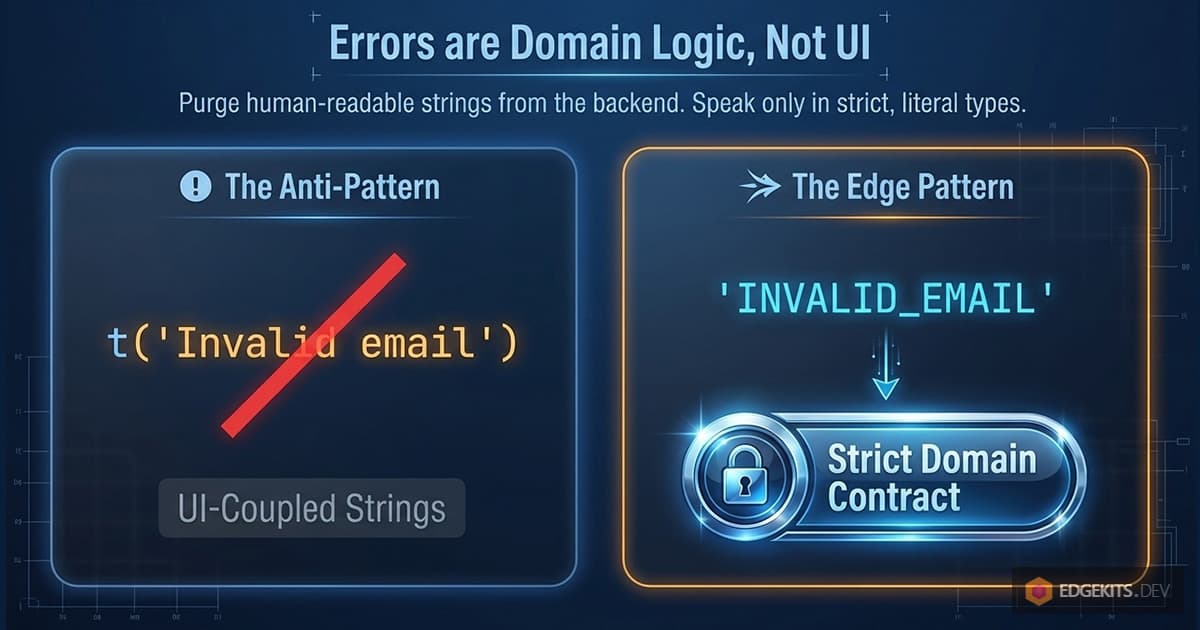

Zero-JS Validation: Error Codes as a Domain Contract

The root of the problem is treating an error as a string of text.

In a typical application, the UI, the API routes, and the domain logic all know about the t() function. Errors are translated the moment they are created. This creates a chaotic system where it is impossible to understand where an error originated, how to log it, or how to reliably change its language context.

In EdgeKits, we introduced a strict paradigm shift: An error is a part of the domain, not the UI.

Step 1: Strict Literal Types

We replaced translated strings with strict, language-agnostic literal types. We created a single, unified dictionary of error codes for the entire application.

```ts
// src/domain/messages/error-codes.ts

// Server actions/API errors
export const SERVER_ERROR_CODES = {
  INTERNAL_SERVER_ERROR: 'INTERNAL_SERVER_ERROR',
} as const

export type ServerErrorCode =
  (typeof SERVER_ERROR_CODES)[keyof typeof SERVER_ERROR_CODES]

// UI / Validation Errors
export const UI_ERROR_CODES = {
  // Newsletter / identity
  INVALID_EMAIL: 'INVALID_EMAIL',
  EMAIL_ALREADY_EXISTS: 'EMAIL_ALREADY_EXISTS',
  FAILED_TO_INSERT_SUBSCRIBER: 'FAILED_TO_INSERT_SUBSCRIBER',

  // Segmentation
  INTERESTS_REQUIRED: 'INTERESTS_REQUIRED',
  BILLING_OTHER_REQUIRED: 'BILLING_OTHER_REQUIRED',
} as const

export type UiErrorCode = (typeof UI_ERROR_CODES)[keyof typeof UI_ERROR_CODES]

// Merge for use cases where both groups are needed
export const ERROR_MESSAGE_CODES = {
  ...SERVER_ERROR_CODES,
  ...UI_ERROR_CODES,
} as const

export type ErrorMessageCode =
  (typeof ERROR_MESSAGE_CODES)[keyof typeof ERROR_MESSAGE_CODES]
```

Why this matters:

- as const ensures these are strict literal types, not generic strings.
- We avoid TypeScript enums; plain objects with as const are better for edge compatibility and serialization.
- There is only one set of error codes across the entire product.

Step 2: Zod Speaks in Codes

Now, we enforce this contract at the schema level. When we define our Zod schema for the newsletter form, we don’t write human-readable messages. We map the validation failures directly to our domain codes.

```ts
// src/db/forms.ts
// In reality, this schema is wider and collects analytics data (e.g. subscription source).
// We are omitting those fields here for brevity and focusing on validation.
import { z } from 'zod'
import { createInsertSchema } from 'drizzle-zod'
import { subscribers } from '@/db/schema'
import { ERROR_MESSAGE_CODES } from '@/domain/messages'

const SubscriberInsertSchema = createInsertSchema(subscribers)

export const NewsletterFormSchema = SubscriberInsertSchema.pick({
  email: true,
}).extend({
  // Zod returns a domain code instead of a string.
  // z.email() is the Zod 4 top-level API; on Zod 3 the equivalent is z.string().email(...)
  email: z.email({ message: ERROR_MESSAGE_CODES.INVALID_EMAIL }),
})

export type NewsletterFormData = z.infer<typeof NewsletterFormSchema>
```

If a user enters foo@bar into the client-side form, Zod doesn’t try to figure out whether the user is German or Japanese. It simply returns "INVALID_EMAIL".

The validation logic is now completely decoupled from the localization layer. The client bundle remains incredibly small because it only contains the schema rules, not the dictionaries.

But what happens when the error doesn’t come from Zod, but from the backend? This is where Astro Actions step in.

Astro Actions Error Handling & The DomainContext Pattern

So, Zod now returns "INVALID_EMAIL" instead of a human-readable string. But client-side validation is only the first line of defense. What happens when the data is valid, but the business logic fails on the backend? For example, the user submits an email that already exists in your database.

In Astro, the bridge between the client and the server is handled by Astro Actions. However, when running on Cloudflare Workers, we face a unique architectural challenge: bindings.

To access your D1 database or KV namespaces, you need the Cloudflare Env object. Passing this env object through every single service, repository, and utility function is a notorious DX nightmare that pollutes your domain logic with infrastructure details.

(A quick side note: If you are already using the shiny new Astro 6.x with the updated Cloudflare adapter, you can now import { env } from 'cloudflare:workers' directly anywhere in your server code. However, this project is built on Astro 5.x, where env is strictly injected into the request context. More importantly, regardless of the framework version, keeping infrastructure imports out of your domain logic remains a superior architectural pattern for testability and decoupling).

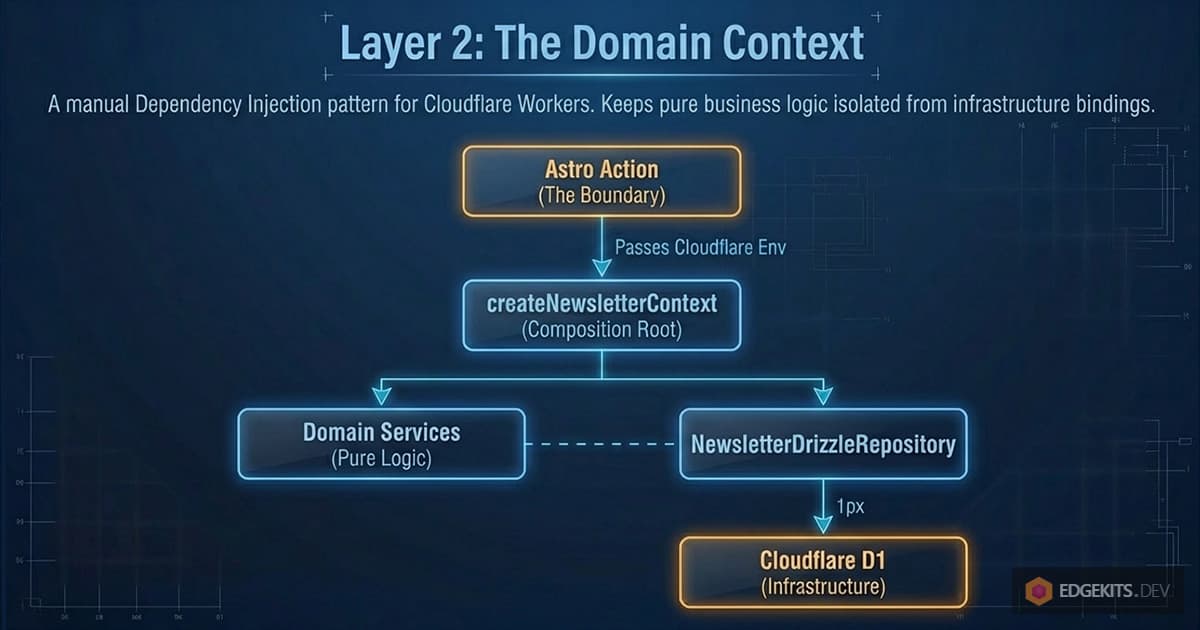

The DomainContext Solution

To keep our actions clean and our domain edge-native, we use a strict DomainContext pattern.

A DomainContext is a request-scoped composition root. It is the only place where the Cloudflare Env is used to wire up repositories and services. The Astro Action simply creates the context and delegates the work.

Here is a simplified diagram of this architecture:

```mermaid
graph TD
  A[Astro Action] -->|Passes Env| B(createNewsletterContext)
  B -->|Initializes| C[NewsletterDrizzleRepository]
  B -->|Wires up| D[Domain Services]
  D -.->|Uses| C
  C -.->|Queries| E[(Cloudflare D1)]
```

Let’s break down what is happening here:

- Astro Action: The starting point. This is the Astro server function that receives data from the user. It passes the environment variables (Cloudflare bindings) further down the chain.

- createNewsletterContext: The initializer. It creates the execution “context” - gathering all the necessary tools for the newsletter operations in one place.

- NewsletterDrizzleRepository: The data access layer. It uses the Drizzle ORM to translate our code into raw SQL queries.

- Domain Services: The business logic. This is the “brain” of the application that decides exactly what needs to be done with the data before saving it. It uses the repository to communicate with the database.

- Cloudflare D1: The final destination. The serverless relational SQL database on the Cloudflare platform where the data is physically stored.

The takeaway: What we have here is a classic manual Dependency Injection pattern. The business logic (Services) is completely decoupled from the database operations (Repository), and everything is cleanly wired together inside a single context the exact moment the Action is invoked.

And here is the actual implementation from our codebase:

```ts
// src/domain/newsletter/context.ts
import { NewsletterDrizzleRepository } from './repository'
import { validateContact } from './services'

export function createNewsletterContext(env: Env) {
  // 1. Initialize the concrete repository with Env (D1 binding)
  const repository = new NewsletterDrizzleRepository(env)

  // 2. Return the public API of the domain
  return {
    repository,

    validateContact(input: { email: string }) {
      // The service knows about the repository interface, but knows nothing about Env
      return validateContact(repository, input)
    },

    addContactToDb: async (input: any) => {
      return await repository.insertContact(input)
    },
  }
}
```

To complete the picture, let’s take a look at the validateContact service itself, which is invoked inside the context:

```ts
// src/domain/newsletter/services/validate-contact.ts
import { checkEmailExists } from './validation/check-email-exists'
import type { NewsletterRepository } from '../repository/interface'

export async function validateContact(
  repo: NewsletterRepository,
  input: { email: string },
) {
  await checkEmailExists(repo, input.email)
}
```

But where does that strict error code actually come from? Let’s go one level deeper into the checkEmailExists helper to see how the circle completes:

```ts
// src/domain/newsletter/services/validation/check-email-exists.ts
import { ERROR_MESSAGE_CODES } from '@/domain/messages/error-codes'
import type { NewsletterRepository } from '../../repository'

export async function checkEmailExists(
  repo: NewsletterRepository,
  email: string,
): Promise<void> {
  const exists = await repo.existsByEmail(email)

  if (exists) {
    // We throw the strict domain code, not a localized string!
    throw new Error(ERROR_MESSAGE_CODES.EMAIL_ALREADY_EXISTS)
  }
}
```

This is the core of our error contract. When the database confirms the email is taken, we don’t throw a generic Error('Email already in use') or trigger a translation function. We throw an Error whose message is our strict literal code. This error bubbles up through the validateContact service, gets caught by the Astro Action, and is safely passed to the React Orchestrator without ever exposing database internals or coupling the backend to a specific UI language.

Everything here is crystal clear: the services layer knows absolutely nothing about Cloudflare, the Env object, or the D1 database. It simply accepts a strict, abstract repository interface (NewsletterRepository) and executes pure business logic.

This makes your domain 100% testable and completely independent of the underlying infrastructure. If you decide to migrate from D1 to PostgreSQL, or swap Drizzle for Prisma (or even raw SQL) tomorrow, this code won’t change by a single line.
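To make the testability claim concrete, here is a minimal sketch of a unit test with an in-memory fake repository. It assumes the NewsletterRepository interface exposes existsByEmail(email): Promise<boolean> (the only method checkEmailExists calls). InMemoryNewsletterRepository and captureErrorCode are hypothetical names, and the two service functions are inlined so the snippet runs on its own, without D1, Drizzle, or the Env object:

```typescript
// Minimal sketch: testing the domain service with an in-memory fake repository.
// Assumption: NewsletterRepository only needs existsByEmail for this check.
const ERROR_MESSAGE_CODES = {
  EMAIL_ALREADY_EXISTS: 'EMAIL_ALREADY_EXISTS',
} as const

interface NewsletterRepository {
  existsByEmail(email: string): Promise<boolean>
}

// Same logic as checkEmailExists / validateContact above, inlined for a standalone run
async function checkEmailExists(repo: NewsletterRepository, email: string): Promise<void> {
  if (await repo.existsByEmail(email)) {
    throw new Error(ERROR_MESSAGE_CODES.EMAIL_ALREADY_EXISTS)
  }
}

async function validateContact(repo: NewsletterRepository, input: { email: string }) {
  await checkEmailExists(repo, input.email)
}

// Hypothetical fake: no D1, no Drizzle, no Env
class InMemoryNewsletterRepository implements NewsletterRepository {
  private readonly emails: Set<string>

  constructor(emails: string[] = []) {
    this.emails = new Set(emails)
  }

  async existsByEmail(email: string): Promise<boolean> {
    return this.emails.has(email)
  }
}

// Returns the domain code the service threw, or null if validation passed
async function captureErrorCode(
  repo: NewsletterRepository,
  email: string,
): Promise<string | null> {
  try {
    await validateContact(repo, { email })
    return null
  } catch (error) {
    return error instanceof Error ? error.message : String(error)
  }
}
```

Swapping D1 for another store would mean writing another class that implements the same interface; the service functions themselves stay untouched.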

Now, look how clean and readable the actual Astro Action becomes:

```ts
// src/actions/newsletter.ts
import { ActionError, defineAction } from 'astro:actions'
import { NewsletterActionInputSchema } from './schema'
import { createNewsletterContext } from '@/domain/newsletter/context'
import { ERROR_MESSAGE_CODES, isErrorMessageCode } from '@/domain/messages'

export const newsLetter = {
  subscribe: defineAction({
    input: NewsletterActionInputSchema,
    handler: async (input, context) => {
      // 1. Initialize the domain context using Cloudflare Env
      const newsletter = createNewsletterContext(context.locals.runtime.env)

      try {
        // 2. Execute business logic
        await newsletter.validateContact(input) // Throws if the email is rejected or already exists

        // Enrich data with Cloudflare Geo-IP before saving
        const { timezone, country, city } = context.locals.runtime.cf ?? {}

        const subscriberId = await newsletter.addContactToDb({
          ...input,
          timezone,
          country,
          city,
        })

        return subscriberId
      } catch (error) {
        // 3. Catch domain errors and safely escalate them to the client
        if (error instanceof Error && isErrorMessageCode(error.message)) {
          throw new ActionError({
            message: error.message, // e.g., "EMAIL_ALREADY_EXISTS"
            code: 'BAD_REQUEST',
          })
        }

        // Fallback for unexpected system crashes
        throw new ActionError({
          message: ERROR_MESSAGE_CODES.INTERNAL_SERVER_ERROR,
          code: 'INTERNAL_SERVER_ERROR',
        })
      }
    },
  }),
}
```

Two important details here:

- The Input Schema: Notice that we use NewsletterActionInputSchema instead of directly reusing the database insert schema. Why? Because API inputs rarely match the database 1:1. The client sends a locale and an email, but the action enriches the payload with Cloudflare’s cf object (like country and city) before passing it to the database.
- The Type Guard (isErrorMessageCode): When the domain throws an error, we need to ensure we don’t accidentally leak a raw SQL error or a stack trace to the frontend. isErrorMessageCode is a strict TypeScript type guard that checks if error.message exactly matches one of our predefined codes in ERROR_MESSAGE_CODES. If it doesn’t, we swallow it and return a generic INTERNAL_SERVER_ERROR.
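The guard itself isn’t shown here, so this is a minimal sketch of how such a function could be written, assuming it lives next to ERROR_MESSAGE_CODES (the code map is trimmed to three entries so the snippet runs standalone). It builds a Set of the known values once and narrows an arbitrary value to the ErrorMessageCode union; toClientCode is a hypothetical helper that mirrors the Action’s catch block:

```typescript
// Minimal sketch of an isErrorMessageCode type guard (not the EdgeKits source).
// ERROR_MESSAGE_CODES is trimmed here so the snippet is self-contained.
const ERROR_MESSAGE_CODES = {
  INTERNAL_SERVER_ERROR: 'INTERNAL_SERVER_ERROR',
  INVALID_EMAIL: 'INVALID_EMAIL',
  EMAIL_ALREADY_EXISTS: 'EMAIL_ALREADY_EXISTS',
} as const

type ErrorMessageCode =
  (typeof ERROR_MESSAGE_CODES)[keyof typeof ERROR_MESSAGE_CODES]

// Built once at module load; Set lookup is an exact string match
const KNOWN_CODES: ReadonlySet<string> = new Set<string>(Object.values(ERROR_MESSAGE_CODES))

function isErrorMessageCode(value: unknown): value is ErrorMessageCode {
  return typeof value === 'string' && KNOWN_CODES.has(value)
}

// Hypothetical helper mirroring the Action: known codes pass through,
// anything else (raw driver messages, stack traces) collapses to the generic code
function toClientCode(error: unknown): ErrorMessageCode {
  if (error instanceof Error && isErrorMessageCode(error.message)) {
    return error.message
  }
  return ERROR_MESSAGE_CODES.INTERNAL_SERVER_ERROR
}
```

Because the check is an exact match against a fixed set, a database message such as "UNIQUE constraint failed: subscribers.email" can never pass as a domain code.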

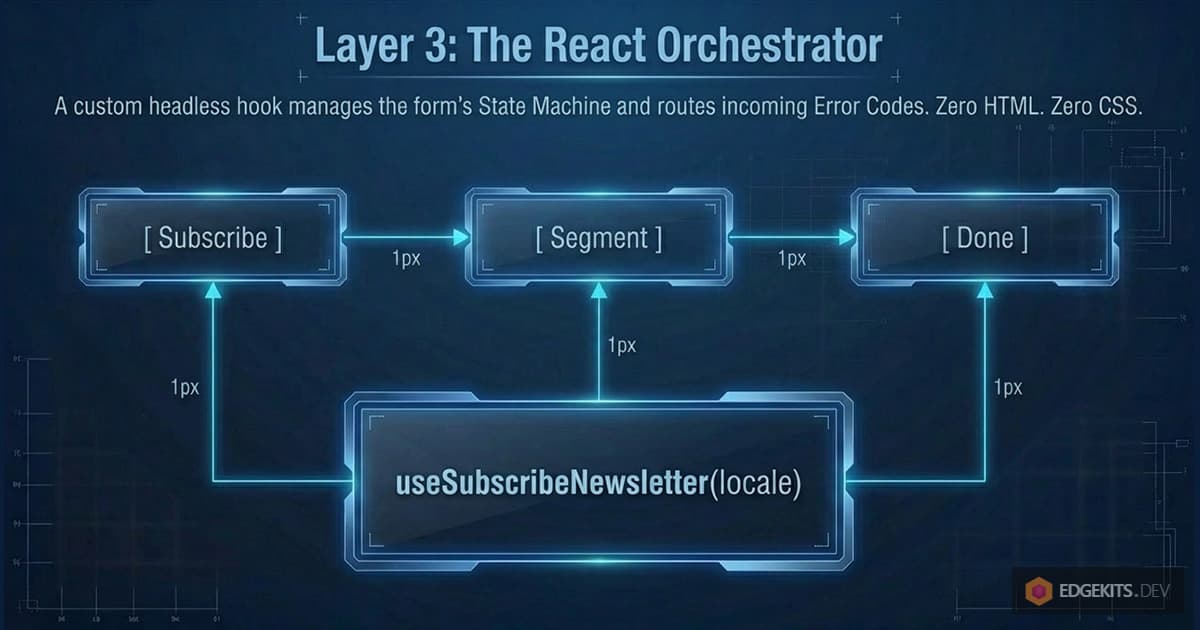

Reducing React Bundle Size: Lazy Loading & State

We now have a client that sends data and a server that safely returns strict Error Codes. How does the UI manage this communication flow without turning into a tangled mess of useEffect hooks?

We decouple the UI components from the business process by introducing a custom hook: useSubscribeNewsletter.

This hook acts as the Orchestrator. It doesn’t know anything about CSS or HTML. Its only job is to manage the form’s State Machine (subscribe -> segment -> done), communicate with the Astro Action, and route the Error Codes to the React components.

```ts
// src/hooks/useSubscribeNewsletter.ts
import { useState } from 'react'
import { actions } from 'astro:actions'
import { isErrorMessageCode, ERROR_MESSAGE_CODES } from '@/domain/messages'

export function useSubscribeNewsletter(locale: string) {
  const [step, setStep] = useState<'subscribe' | 'segment' | 'done'>(
    'subscribe',
  )
  const [pending, setPending] = useState(false)
  const [actionError, setActionError] = useState<string | null>(null)
  const [subscriberId, setSubscriberId] = useState<number | null>(null)

  const subscribeAction = async (values: any) => {
    setPending(true)
    try {
      const { data, error } = await actions.newsLetter.subscribe({
        ...values,
        locale,
      })

      if (error) {
        if (error.code === 'BAD_REQUEST') {
          // Store the strict domain code (e.g., "EMAIL_ALREADY_EXISTS")
          if (isErrorMessageCode(error.message)) {
            setActionError(error.message)
            return
          }
          // Fallback for an uncovered key
          setActionError(ERROR_MESSAGE_CODES.INVALID_EMAIL)
          return
        }
        throw error
      }

      if (data) {
        setActionError(null)
        setSubscriberId(data) // Save ID for the next step
        setStep('segment') // Move state machine forward
      }
    } catch {
      // We will handle global toast notifications here later
    } finally {
      setPending(false)
    }
  }

  // segmentationAction omitted for brevity, but it follows the exact same pattern
  return { pending, step, actionError, setActionError, subscribeAction }
}
```

The Performance Hack: Lazy Loading

The State Machine pattern gives us a massive performance advantage.

Our subscription flow has two parts: asking for the email (Step 1), and asking for the user’s preferences via a complex SegmentationForm using heavy Shadcn Select and MultiSelect components (Step 2).

If we bundle all of this into one file, the client has to download the dropdown logic just to render a simple email input. Instead, we use React’s lazy() in our island module and render the UI conditionally based on the step variable:

```tsx
// src/components/islands/NewsletterFlow.tsx
import { lazy, Suspense } from 'react'
import { NewsletterForm } from '@/domain/forms/components/NewsletterForm'
import { useSubscribeNewsletter } from '@/hooks/useSubscribeNewsletter'

// 1. We load the heavy Segmentation form ONLY when the user reaches that step
const LazySegmentationForm = lazy(() =>
  import('@/domain/forms/components/SegmentationForm').then((module) => ({
    default: module.SegmentationForm,
  })),
)

export const NewsletterFlow = ({ t, locale }) => {
  const {
    pending,
    step,
    actionError,
    setActionError,
    subscribeAction,
    segmentationAction,
  } = useSubscribeNewsletter(locale)

  // Step 3: Success State
  if (step === 'done') {
    return (
      <div>
        <h2>{t.newsletter.subscribed.title}</h2>
        <p>{t.newsletter.subscribed.description}</p>
      </div>
    )
  }

  // Step 2: Segmentation State (Lazy Loaded)
  if (step === 'segment') {
    return (
      <div>
        <h3>{t.newsletter.step.segment.title}</h3>
        {/* A lazy component must render inside a Suspense boundary while its chunk loads */}
        <Suspense fallback={null}>
          <LazySegmentationForm
            t={t}
            onSubmit={segmentationAction}
            actionError={actionError}
            setActionError={setActionError}
            pending={pending}
          />
        </Suspense>
      </div>
    )
  }

  // Step 1: Initial Subscribe State (rendered by default)
  return (
    <div>
      <div>{t.newsletter.step.subscribe.title}</div>
      <NewsletterForm
        t={t.messages}
        source="landing-hero"
        onSubmit={subscribeAction}
        actionError={actionError}
        setActionError={setActionError}
        pending={pending}
      />
    </div>
  )
}
```

Because SegmentationForm is only reachable through a dynamic import(), Vite (Astro’s bundler) emits it as a separate chunk, and because LazySegmentationForm is only rendered once step becomes 'segment', the browser requests that chunk only at that moment.

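The user loads the page, downloads only the JavaScript for the lightweight email form, types their email, and clicks “Subscribe.” The SegmentationForm chunk is requested only after the Astro Action succeeds on the Cloudflare Worker and the state machine switches to the segment step. If you want that download to overlap with the Action’s round trip, you can start the same dynamic import() as soon as the form is submitted; the browser keeps the loaded module, so React.lazy reuses it instead of fetching it again.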

This is how you keep Total Blocking Time (TBT) near 0ms on the initial load while still building a rich, interactive SaaS application.
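Here is a minimal sketch of that early-start idea, assuming a small memoizing wrapper around the loader; preloadable is a hypothetical helper, not a React API. The same function can be handed to React.lazy and also called from subscribeAction before the Action is awaited, so the chunk request overlaps with the server round trip. If the import fails, the cached promise is cleared so a later call can retry:

```typescript
// Minimal sketch: memoize a dynamic-import loader so it can be started early
// and reused later by React.lazy. `preloadable` is a hypothetical helper name.
function preloadable<T>(load: () => Promise<T>) {
  let pending: Promise<T> | undefined

  const get = (): Promise<T> => {
    // First call starts the import; later calls reuse the same promise.
    // On failure the cache is cleared so a later call can retry.
    pending ??= load().catch((error: unknown) => {
      pending = undefined
      throw error
    })
    return pending
  }

  // Fire-and-forget warm-up; errors are ignored here and resurface on the real load
  const preload = (): void => {
    get().catch(() => {})
  }

  return Object.assign(get, { preload })
}

// In the island this would wrap the real import (sketch, not the EdgeKits code):
// const loadSegmentationForm = preloadable(() =>
//   import('@/domain/forms/components/SegmentationForm').then((m) => ({ default: m.SegmentationForm })),
// )
// const LazySegmentationForm = lazy(loadSegmentationForm)
// ...and at the start of subscribeAction: loadSegmentationForm.preload()
```

The trade-off: users who abandon the flow after typing their email still download the segmentation chunk, which is usually acceptable because it happens after the initial render.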

Now, we have a fully functioning flow that operates entirely on Domain Error Codes. The final piece of the puzzle is the Final Mile: transforming those codes into human-readable, localized text right before they hit the screen.

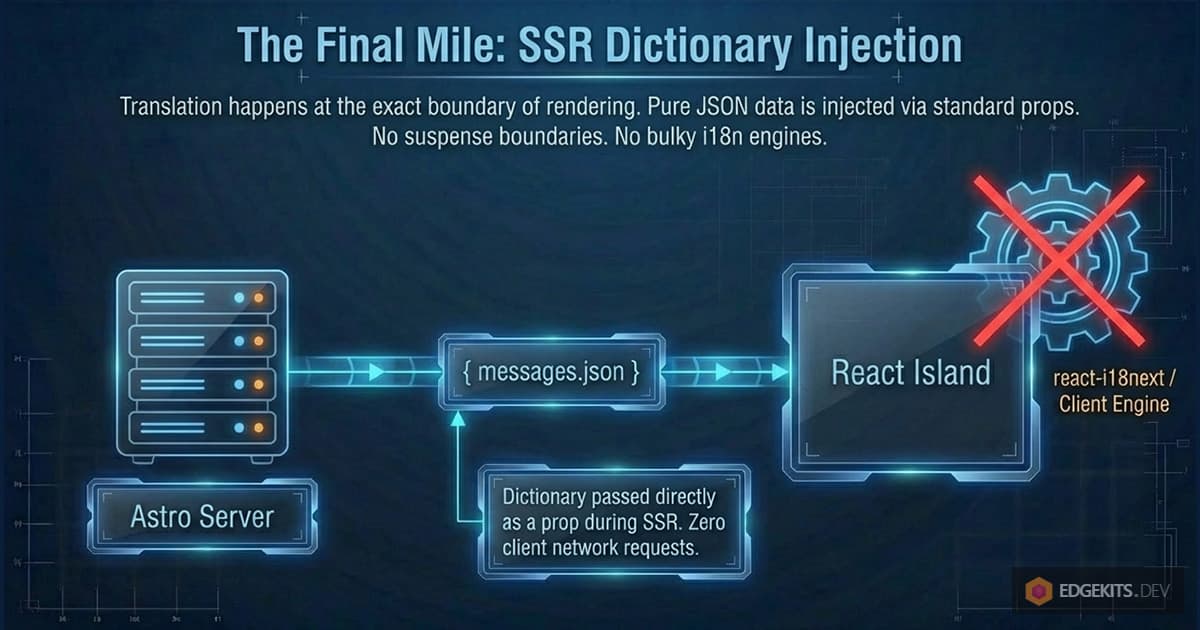

The Final Mile: React Hook Form Localization

We have successfully purged human-readable strings from our validation schemas and our server actions. Both Zod and Astro Actions now speak a universal, language-agnostic language of strict literal codes (like "INVALID_EMAIL" or "EMAIL_ALREADY_EXISTS").

But end-users don’t speak in literal codes. They need to see “Invalid email address” in English, or “Correo electrónico no válido” in Spanish.

If the domain logic is decoupled from the language, where exactly does the translation happen?

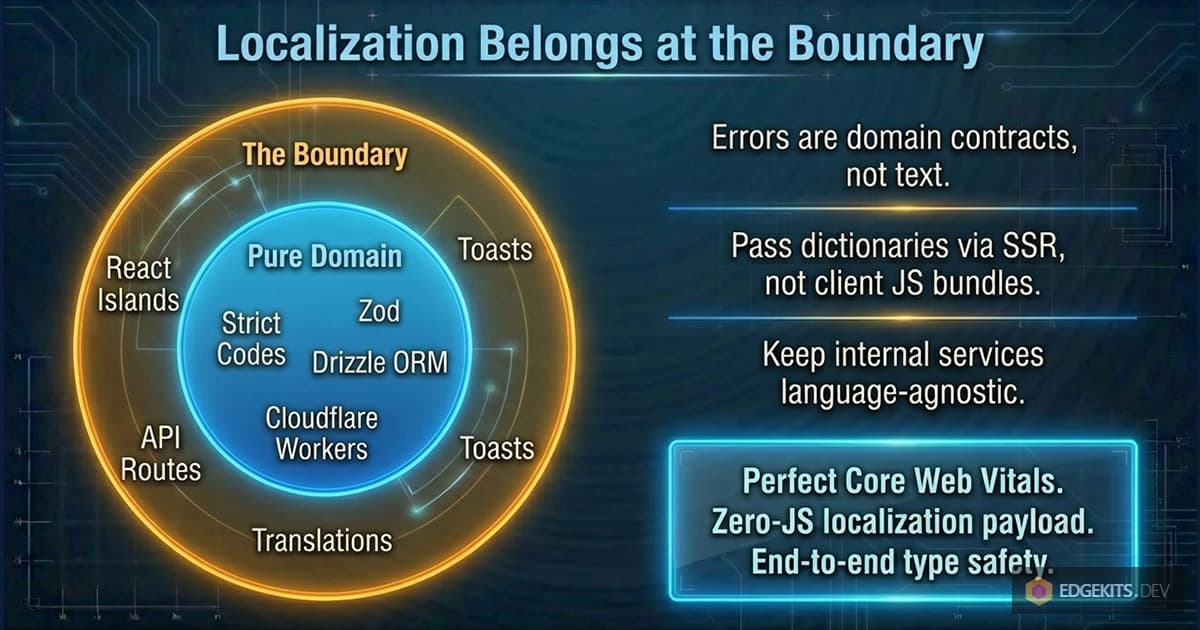

It happens at the very boundary of our application - at the exact moment of rendering the React UI. This is the Final Mile.

The Bridge: Passing Lightweight Dictionaries

Instead of bundling a heavy i18n library (like react-i18next) and initializing translation contexts inside our React tree, we treat translations as pure data.

When the Astro server renders the page, it fetches the necessary translation namespace (e.g., messages.json) from Cloudflare KV (or the Edge Cache) and passes it directly to the React Island as a standard prop.

```tsx
// src/components/islands/NewsletterFlow.tsx (Simplified)
export const NewsletterFlow = ({ t, locale }) => {
  // 't' is just a lightweight JavaScript object containing our localized strings
  const { messages } = t
  // subscribeAction and actionError come from useSubscribeNewsletter(locale), omitted here

  return (
    <NewsletterForm
      // We pass only the specific dictionary needed for errors
      t={messages}
      onSubmit={subscribeAction}
      actionError={actionError} // This holds our strict code from the server
      // ...
    />
  )
}
```

This is the essence of Zero-JS React Hook Form localization. There are no client-side network requests for JSON files, no Suspense boundaries for loading translations, and no bulky i18n engines. The dictionary is just a POJO (Plain Old JavaScript Object) injected during Server-Side Rendering (SSR).
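This part doesn’t show how the dictionary travels from KV into that prop. As an illustration only, here is a minimal sketch of a server-side namespace loader with a default-locale fallback. KVLike is a local stand-in that mirrors KVNamespace.get(key, 'json') (which resolves to the parsed value, or null for a missing key); the loadNamespace name and the "locale:namespace" key scheme are assumptions, not the EdgeKits fetcher:

```typescript
// Hypothetical sketch, not the EdgeKits fetcher: load one translation namespace from KV
// on the server, falling back to the default locale when the requested one is missing.
interface KVLike {
  // Mirrors KVNamespace.get(key, 'json'): parsed JSON, or null when the key does not exist
  get(key: string, type: 'json'): Promise<unknown>
}

type Dictionary = Record<string, unknown>

async function loadNamespace(
  kv: KVLike,
  locale: string,
  namespace: string,
  defaultLocale = 'en',
): Promise<Dictionary> {
  // Assumed key scheme: "<locale>:<namespace>", e.g. "es:messages"
  const requested = (await kv.get(`${locale}:${namespace}`, 'json')) as Dictionary | null
  if (requested) return requested

  // Graceful fallback to the default locale, then to an empty dictionary
  const fallback = (await kv.get(`${defaultLocale}:${namespace}`, 'json')) as Dictionary | null
  return fallback ?? {}
}
```

In an Astro page you would call something like this in the frontmatter with the KV binding from Astro.locals.runtime.env and pass the result to the island as its t prop; only that one namespace ends up serialized into the island’s props.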

To make this completely clear, here is what that lightweight messages.json dictionary actually looks like:

```jsonc
// locales/en/messages.json
{
  "errors": {
    "ui": {
      "INVALID_EMAIL": "Invalid email address.",
      "EMAIL_ALREADY_EXISTS": "This email address already exists.",
      "INTERESTS_REQUIRED": "Please select at least one product.",
      "BILLING_OTHER_REQUIRED": "Please specify your billing provider."
    },
    "server": {
      "INTERNAL_SERVER_ERROR": "Something went wrong. Please try again."
    }
  },
  "common": {
    "PENDING": "Please wait",
    "SUBSCRIPTION_SUCCEED": "Thanks for your subscription!"
  }
}
```

Notice the keys in the ui and server objects. They are not random strings; they are the very same literals we defined in UI_ERROR_CODES and SERVER_ERROR_CODES (merged into ERROR_MESSAGE_CODES) in our domain contract. This is the missing link that ties backend validation directly to UI translation without any intermediary mapping logic.
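Because those keys live in a JSON file, nothing stops the dictionary from drifting out of sync with the contract: FAILED_TO_INSERT_SUBSCRIBER, for example, is defined in UI_ERROR_CODES but has no entry in the sample dictionary. A minimal sketch of a check you could run in a unit test or build script; findMissingTranslations is a hypothetical helper, and the data is copied from the snippets in this article:

```typescript
// Sketch: detect domain codes that have no translation in a locale dictionary.
const UI_ERROR_CODES = {
  INVALID_EMAIL: 'INVALID_EMAIL',
  EMAIL_ALREADY_EXISTS: 'EMAIL_ALREADY_EXISTS',
  FAILED_TO_INSERT_SUBSCRIBER: 'FAILED_TO_INSERT_SUBSCRIBER',
  INTERESTS_REQUIRED: 'INTERESTS_REQUIRED',
  BILLING_OTHER_REQUIRED: 'BILLING_OTHER_REQUIRED',
} as const

type UiErrorCode = (typeof UI_ERROR_CODES)[keyof typeof UI_ERROR_CODES]

// The "errors.ui" section of locales/en/messages.json
const enUiErrors: Partial<Record<UiErrorCode, string>> = {
  INVALID_EMAIL: 'Invalid email address.',
  EMAIL_ALREADY_EXISTS: 'This email address already exists.',
  INTERESTS_REQUIRED: 'Please select at least one product.',
  BILLING_OTHER_REQUIRED: 'Please specify your billing provider.',
}

// Returns every code without a non-empty translation, in declaration order
function findMissingTranslations<C extends string>(
  codes: Record<string, C>,
  dictionary: Partial<Record<C, string>>,
): C[] {
  return Object.values(codes).filter((code) => !dictionary[code])
}

const missing = findMissingTranslations(UI_ERROR_CODES, enUiErrors)
// missing → ['FAILED_TO_INSERT_SUBSCRIBER']
```

In CI you would fail the build whenever the list is non-empty. If the dictionary is typed in TypeScript instead, declaring it as Record<UiErrorCode, string> (without Partial) turns a missing key into a compile error.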

The Component: FieldErrorLocalized

Inside our form, we need a component that knows how to read our domain codes and translate them using the provided dictionary.

Let’s look at the anatomy of <FieldErrorLocalized />. It receives the error state from react-hook-form (which originates from Zod) and the error state from our Action (which originates from the D1 database).

```tsx
// src/ui/forms/FieldErrorLocalized.tsx
import { FieldError } from '@/components/ui/field'
import { mapErrorsToI18n } from './error-mapper'

type ErrorLike = { message?: string }

interface FieldErrorLocalizedProps {
  fieldError?: ErrorLike // Error from Zod (react-hook-form)
  actionError?: string | null // Error from Astro Action
  tErrors: Record<string, string> // Our lightweight dictionary
  className?: string
}

export function FieldErrorLocalized({
  fieldError,
  actionError,
  tErrors,
  className,
}: FieldErrorLocalizedProps) {
  if (!fieldError && !actionError) return null

  // We map the domain codes to actual localized strings
  const errors = mapErrorsToI18n(
    [fieldError, actionError ? { message: actionError } : undefined],
    tErrors,
  )

  if (!errors.length) return null

  // We render the standard Shadcn UI FieldError component
  return <FieldError errors={errors} className={className} />
}
```

The component is entirely dumb. It doesn’t know what language the user selected. It simply delegates the translation to a pure mapping function.

The Pure Mapper

Here is the function that performs the actual translation. Because Zod and our Actions both output the domain code inside the message property, the mapping logic is beautifully simple:

```ts
// src/ui/forms/error-mapper.ts
type ErrorLike = { message?: string }

/**
 * Maps multiple error-like objects (containing domain codes) to localized messages.
 */
export function mapErrorsToI18n(
  issues: Array<ErrorLike | undefined>,
  tErrors: Record<string, string>,
): ErrorLike[] {
  return issues
    .map((issue) => {
      // 1. Check if an error exists
      if (!issue?.message) return undefined

      // 2. Use the domain code (e.g., "INVALID_EMAIL") as a key in the dictionary
      const localized = tErrors[issue.message]

      // 3. If no translation is found, we don't render an empty string
      if (!localized) return undefined

      // 4. Return the translated string back in the expected format
      return { message: localized }
    })
    .filter(Boolean) as ErrorLike[]
}
```

The Result

By isolating localization to the very edges of the UI:

- Forms are decoupled: They don’t know about i18n or Zod. They just pass errors down.

- The Domain is clean: Zod schemas and Astro Actions use strict, type-safe literal codes.

- The Bundle is tiny: We completely eliminated the need for zod-i18n-map and any client-side localization engines. The user downloads only the exact strings needed for the current screen.

This architecture scales perfectly. Whether you add toast notifications, global process errors, or new languages, the core domain logic remains untouched, and the Lighthouse performance score stays at 90+.

Process Errors and Global Toasts

So far, we have covered Field-level errors - issues like a typo in an email that should be displayed directly under the input field.

But what about Process-level events? If the database connection drops unexpectedly, or if the user successfully completes the subscription flow, we need to provide global feedback. In modern UI design, this is usually handled by a Toast notification library (like Sonner).

Because our entire architecture speaks in strict domain codes, integrating localized toasts is incredibly clean. We don’t want our useSubscribeNewsletter hook to import translation libraries or know about UI components. Instead, we use the Inversion of Control principle and pass simple callbacks.

Let’s update our orchestrator hook to accept success and error callbacks:

```ts
// src/hooks/useSubscribeNewsletter.ts (Updated)
import { useState } from 'react'
import { actions } from 'astro:actions'
import {
  COMMON_MESSAGE_CODES,
  ERROR_MESSAGE_CODES,
  isErrorMessageCode,
  type CommonMessageCode,
  type ServerErrorCode,
} from '@/domain/messages'

export function useSubscribeNewsletter(
  locale: string,
  options: {
    onSuccess: (code: CommonMessageCode) => void
    onServerError: (code: ServerErrorCode) => void
  },
) {
  // ... state initialization

  const subscribeAction = async (values: any) => {
    // ... validation logic
    try {
      // ... action call
    } catch {
      // Server / network / unexpected errors
      options.onServerError(ERROR_MESSAGE_CODES.INTERNAL_SERVER_ERROR)
    }
  }

  const segmentationAction = async (values: any) => {
    // ... validation logic
    try {
      const { data, error } = await actions.newsLetter.segment({
        /*...*/
      })

      // ... error handling

      if (data) {
        setActionError(null)
        setStep('done')
        // Trigger the success callback with a strict domain code
        options.onSuccess(COMMON_MESSAGE_CODES.SUBSCRIPTION_SUCCEED)
      }
    } catch (error) {
      options.onServerError(ERROR_MESSAGE_CODES.INTERNAL_SERVER_ERROR)
    }
  }

  return {
    /* ... */
  }
}
```

Now, back in our NewsletterFlow component (our Island wrapper), we provide those callbacks. Since the wrapper already received the lightweight JSON dictionary via props during SSR, it can instantly translate the domain code and fire the toast notification.

```tsx
// src/components/islands/NewsletterFlow.tsx
import { toast } from 'sonner'
import { useSubscribeNewsletter } from '@/hooks/useSubscribeNewsletter'
import type { ServerErrorCode, CommonMessageCode } from '@/domain/messages'

export const NewsletterFlow = ({ t, locale }) => {
  const { messages } = t

  const {
    // ...
    subscribeAction,
    segmentationAction,
  } = useSubscribeNewsletter(locale, {
    // We receive the domain code and map it directly to our dictionary
    onSuccess: (code: CommonMessageCode) =>
      toast.success(messages.common[code]),
    onServerError: (code: ServerErrorCode) =>
      toast.error(messages.errors.server[code]),
  })

  // ... render logic (Sonner's <Toaster /> must be mounted on the page for toasts to appear)
}
```

(Note: In a future deep-dive, we will explore how to optimize these interactive islands even further using custom client:interaction directives in Astro to delay loading the Toast library until it’s actually needed. But for now, standard hydration works perfectly.)

And there you have it: a complete, end-to-end interactive flow that handles complex Zod validation, server-side Astro Actions, lazy-loaded components, and global toast notifications, all fully localized, fully type-safe, and without shipping a single byte of translation-engine code to the client.

API Routes, Webhooks & Internal Microservices

Throughout this article, we’ve relied heavily on Astro Actions for frontend-to-backend communication. In my practice, Actions are the undisputed king for UI interactions because they provide end-to-end type safety out of the box.

I use standard Astro API Routes (src/pages/api/) almost exclusively for external integrations: payment webhooks (Stripe, Paddle, LemonSqueezy), 3rd-party callbacks, or Telegram bot endpoints.

But as your SaaS scales, you will likely offload heavy background tasks to separate, internal Cloudflare Workers via Service Bindings (which allow workers to communicate with zero network latency).

Whether your boundary is an Astro Action serving a React form, or an Astro API Route serving a Telegram bot webhook, the architectural rule remains identical: Localization at the Boundary.

Imagine you have a Telegram Bot API Route that processes a subscription via an internal Billing Worker. Should that internal worker know the user’s language or import dictionaries?

Absolutely not.

Internal microservices and domain logic must remain strictly language-agnostic. They communicate exclusively via machine-readable domain codes ("INSUFFICIENT_FUNDS"). It is the responsibility of the API Route (the absolute boundary facing the external world) to intercept this code and translate it right before responding:

```ts
// src/pages/api/webhooks/telegram.ts
import type { APIRoute } from 'astro'
import { fetchTranslations } from '@/domain/i18n/fetcher'

export const POST: APIRoute = async (context) => {
  // Telegram sends an Update object; the sender and chat are nested under payload.message
  const payload = await context.request.json()

  // 1. Identify the external user's preferred language (e.g., 'es')
  const userLang = payload.message?.from?.language_code || 'en'

  // 2. Call internal language-agnostic service (e.g., via Cloudflare Service Binding)
  const result = await context.locals.runtime.env.BILLING_SERVICE.chargeUser(
    payload.message?.from?.id,
  )

  if (result.error) {
    // result.error is a strict domain code like "INSUFFICIENT_FUNDS"

    // 3. Fetch the dictionary specifically for this external user
    const { messages } = await fetchTranslations(
      context.locals.runtime,
      userLang,
      ['messages'],
    )

    // 4. Translate at the boundary
    const text =
      messages.errors.billing[result.error] ||
      messages.errors.server.INTERNAL_SERVER_ERROR

    // Send localized response back to Telegram API
    // (sendTelegramReply is a small Bot API helper, not shown here)
    await sendTelegramReply(payload.message.chat.id, text)

    return new Response('OK')
  }

  return new Response('OK')
}
```

By pushing localization to the extreme edges of your architecture (React Islands for the UI, and API Routes for external consumers), your internal services remain lightweight, highly cacheable, and much easier to test.

Cloudflare D1 & Drizzle ORM Localization (UGC)

The final boss of internationalization is dynamic data. Translating static UI strings like “Submit” is simple, but what about data created by your users? If you are building a multi-tenant SaaS, your users might create product categories or pricing tiers that need to be localized.

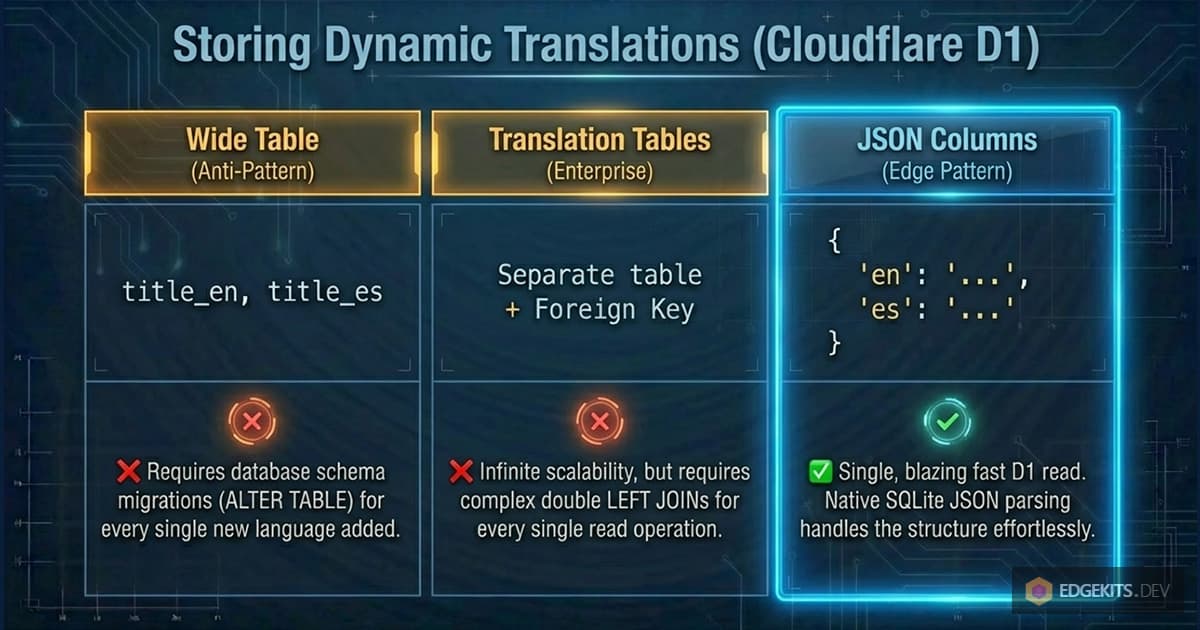

How do you store this in Cloudflare D1 using Drizzle ORM? Let’s look at the trade-offs of the three standard approaches.

1. The Anti-Pattern: The “Wide Table”

The most common beginner mistake is adding language-specific columns to the main table:

```typescript
// ❌ The Wide Table Anti-Pattern
import { integer, sqliteTable, text } from 'drizzle-orm/sqlite-core'

export const products = sqliteTable('products', {
  id: integer('id').primaryKey(),
  title_en: text('title_en'),
  title_es: text('title_es'),
  title_de: text('title_de'),
})
```

This is an architectural dead end. Every time marketing asks to support a new language (e.g., French), you have to run a database migration (ALTER TABLE), update your Drizzle schema, and redeploy the backend.

2. The Enterprise Pattern: Translation Tables

The strict relational approach is to separate the core entity from its translations using a one-to-many relationship.

```typescript
// ✅ The Relational Pattern
import { integer, sqliteTable, text } from 'drizzle-orm/sqlite-core'

export const products = sqliteTable('products', {
  id: integer('id').primaryKey(),
  price: integer('price'), // Language-agnostic data
})

export const productTranslations = sqliteTable('product_translations', {
  id: integer('id').primaryKey(),
  productId: integer('product_id')
    .notNull()
    .references(() => products.id),
  locale: text('locale').notNull(), // 'en', 'es', 'de'
  title: text('title').notNull(),
  // In practice, also add a unique index on (product_id, locale)
})
```

Pros: It scales to any number of languages. Adding a new language means inserting new rows, not modifying the schema.

Cons: It requires JOINs for every read query.

To implement Graceful Fallback (the “Split-Brain” logic we discussed in Part 1) in pure SQL, you would perform a double LEFT JOIN - once for the requested uiLocale, and once for the default fallback locale (e.g., 'en'). You then use COALESCE(es.title, en.title) to let the database automatically decide which string to return.
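As a rough illustration of that double LEFT JOIN, here is a sketch that builds the raw SQL together with its bound parameters. The helper name buildLocalizedProductsQuery is hypothetical. Table and column names follow the products / product_translations schema above, and the es/en aliases from the prose are renamed req (requested locale) and fb (fallback), because the locale arrives as a parameter rather than being hard-coded.

```typescript
// Hypothetical helper: resolve each product title in the requested locale,
// falling back to a default locale when no translation row exists.
interface LocalizedQuery {
  sql: string
  params: string[]
}

function buildLocalizedProductsQuery(
  uiLocale: string,
  fallbackLocale = 'en',
): LocalizedQuery {
  const sql = `
    SELECT
      p.id,
      p.price,
      COALESCE(req.title, fb.title) AS title
    FROM products AS p
    LEFT JOIN product_translations AS req
      ON req.product_id = p.id AND req.locale = ?
    LEFT JOIN product_translations AS fb
      ON fb.product_id = p.id AND fb.locale = ?
  `.trim()

  // Placeholder order matters: requested locale first, fallback second
  return { sql, params: [uiLocale, fallbackLocale] }
}

const { sql, params } = buildLocalizedProductsQuery('es')
console.log(params)
```

On Cloudflare you could run it against a D1 binding (assumed here to be named DB) with env.DB.prepare(sql).bind(...params).all(). The query assumes at most one row per (product_id, locale); without a unique index on that pair, duplicate translations would multiply the result rows.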

3. The Modern Edge Pattern: JSON Columns

Because Cloudflare D1 is built on SQLite, it inherits SQLite's built-in (and fast) JSON functions, such as json_extract. For read-heavy Edge applications, we can combine this with JSON columns to avoid JOINs entirely.

```typescript
// 🚀 The Edge Pattern (NoSQL in SQL)
import { integer, sqliteTable, text } from 'drizzle-orm/sqlite-core'

export const products = sqliteTable('products', {
  id: integer('id').primaryKey(),
  // Drizzle serializes and parses the JSON automatically
  translations: text('translations', { mode: 'json' })
    .$type<Record<string, { title: string }>>()
    .notNull(),
})
```

The stored JSON looks like this:

```json
{
  "en": { "title": "Shoes" },
  "es": { "title": "Zapatos" }
}
```

Why this wins on the Edge: you retrieve the entire entity with a single, fast D1 read. There are no complex SQL joins. The Graceful Fallback logic is handled cleanly in your TypeScript DomainContext:

```typescript
// Handled cleanly in the Domain Services layer
const localizedTitle =
  row.translations[uiLocale]?.title ?? row.translations['en']?.title
```

For most SaaS use cases on Cloudflare Workers, this JSON-column approach hits the sweet spot between developer experience, database performance, and schema flexibility.
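The one-liner above hard-codes a single fallback. If you localize several fields, or want a regional variant such as 'es-MX' to try 'es' before 'en', a small helper keeps that logic in one place. This is a hypothetical sketch: pickLocalized and fallbackChain are illustrative names, not part of Astro EdgeKits Core, and treating an empty string as "not translated" is a design assumption that suits user-generated content.

```typescript
// Shape of the JSON column: locale -> localized string fields
type Translations<F extends string> = Record<
  string,
  Partial<Record<F, string>> | undefined
>

// Walk the locale chain and return the first non-empty value for the field
function pickLocalized<F extends string>(
  translations: Translations<F> | null | undefined,
  field: F,
  locales: readonly string[],
): string | undefined {
  for (const locale of locales) {
    const value = translations?.[locale]?.[field]
    if (value) return value // skips missing entries and empty strings
  }
  return undefined
}

// Build a chain such as 'es-MX' -> 'es' -> 'en' without duplicates
function fallbackChain(uiLocale: string, defaultLocale = 'en'): string[] {
  const chain = [uiLocale]
  const base = uiLocale.split('-')[0]
  if (base !== uiLocale) chain.push(base)
  if (!chain.includes(defaultLocale)) chain.push(defaultLocale)
  return chain
}

// Usage with a row shaped like the products.translations column
const translations = {
  en: { title: 'Shoes' },
  es: { title: 'Zapatos' },
}
console.log(pickLocalized(translations, 'title', fallbackChain('es-MX')))
```

Keeping the chain explicit makes the fallback order easy to unit-test and to reuse across every JSON-localized entity.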

Conclusion

Internationalization is rarely a feature you can just “bolt on” at the end of a project. When you treat translations as massive JavaScript bundles that must be downloaded, parsed, and executed by the client’s browser, you are fundamentally crippling your application’s performance.

By inverting the control - by treating errors as strict domain codes, resolving languages in Astro Middleware, and isolating translations to the absolute Edge of your architecture - you achieve something rare. You get a fully localized, type-safe, complex interactive React application that still ships with a Zero-JS localization payload and perfect Core Web Vitals.

This isn’t just theory. This is exactly how we built EdgeKits.

Get the Code & Stay Updated

You don’t have to build the foundation from scratch. While the advanced Zod mapping, state-machine orchestrators, and DomainContext patterns we discussed today are specific to our production application, the underlying Edge-native i18n architecture - including the Astro middleware, caching logic, and Split-Brain fallback - is available in our open-source starter kit.

👉 Star the Repo to support the project: Astro EdgeKits Core.

If you found this deep dive valuable, you may like to know that we are currently building production-ready SaaS and Telegram Mini App starter kits based on this exact architecture (they will include all these advanced domain patterns out of the box).

Join the Early Birds list (using the form below or in the hero section) to get launch updates, release notes, and early-bird pricing. No spam, ever.